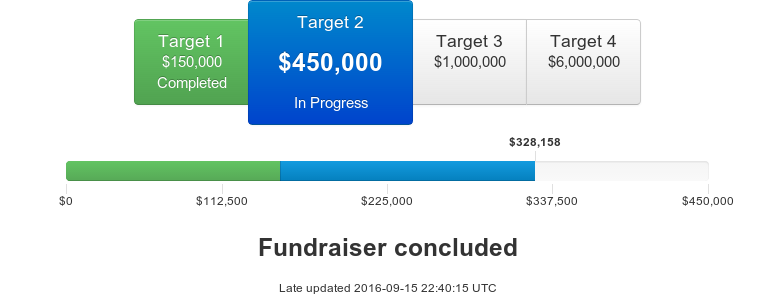

The Machine Intelligence Research Institute’s 2015 winter fundraising drive begins today, December 1!

The drive will run for the month of December, and will help support MIRI’s research efforts aimed at ensuring that smarter-than-human AI systems have a positive impact.

MIRI’s Research Focus

The field of AI has a goal of automating perception, reasoning, and decision-making — the many abilities we group under the label “intelligence.” Most leading researchers in AI expect our best AI algorithms to begin strongly outperforming humans this century in most cognitive tasks. In spite of this, relatively little time and effort has gone into trying to identify the technical prerequisites for making smarter-than-human AI systems safe and useful.

We believe that several basic theoretical questions will need to be answered in order to make advanced AI systems stable, transparent, and error-tolerant, and in order to specify correct goals for such systems. Our technical agenda describes what we think are the most important and tractable of these questions.

We consider it important to begin work on these problems now, for several reasons:

- High capability ceilings — Humans appear to be nowhere near physical limits for cognitive ability, and even modest advantages in intelligence may yield decisive strategic advantages for AI systems.

- “Sorcerer’s Apprentice” scenarios — Smarter AI systems can come up with increasingly creative ways to meet programmed goals. The harder it is to anticipate how a goal will be achieved, the harder it is to specify the correct goal.

- Convergent instrumental goals — By default, highly capable decision-makers are likely to have incentives to treat human operators adversarially.

- AI speedup effects — Progress in AI is likely to accelerate as AI systems approach human-level proficiency in skills like software engineering.

We think MIRI is well-positioned to make progress on these problems for four reasons: our initial technical results have been promising (see our publications), our methodology has a good track record (see MIRI’s Approach), we have already had a significant influence on the debate about long-run AI outcomes (see Assessing Our Past and Potential Impact), and we focus exclusively on these issues (see What Sets MIRI Apart?). MIRI is currently the only organization specializing in long-term technical AI safety research, and our independence from industry and academia allows us to effectively address gaps in other institutions’ research efforts.

General Progress This Year

In June, Luke Muehlhauser left MIRI for a research position at the Open Philanthropy Project. I replaced Luke as MIRI’s Executive Director, and I’m happy to say that the transition has gone well. We’ve split our time between technical research and academic outreach, running a workshop series aimed at introducing a wider scientific audience to our work and sponsoring a three-week summer fellows program aimed at teaching the skills required for groundbreaking theoretical research.

Our fundraiser this summer was our biggest to date. We raised a total of $631,957 from 263 distinct donors, smashing our previous funding drive record by over $200,000. Medium-sized donors stepped up their game to help us hit our first two funding targets: many more donors gave between $5,000 and $50,000 than in past fundraisers. Our successful fundraisers, workshops, and fellows program have allowed us to ramp up our growth substantially, and have already led directly to several new researcher hires.

Three new full-time research fellows have joined our team this year: Patrick LaVictoire in March, Jessica Taylor in August, and Andrew Critch in September. Scott Garrabrant will become our newest research fellow this month, after having made major contributions as a workshop attendee and research associate.

Meanwhile, our two new research interns, Kaya Stechly and Rafael Cosman, have been going through old results and consolidating and polishing material into new papers; and three of our new research associates, Vanessa Kosoy, Abram Demski, and Tsvi Benson-Tilsen, have been producing a string of promising results on our research forum. Another intern, Jack Gallagher, contributed to our type theory project over the summer.

To accommodate our growing team, we’ve recently hired a new office manager, Andrew Lapinski-Barker, and will be moving into a larger office space this month. On the whole, I’m very pleased with our new academic collaborations, outreach efforts, and growth.

Research Progress This Year

As our research projects and collaborations have multiplied, we’ve made more use of online mechanisms for quick communication and feedback between researchers. In March, we launched the Intelligent Agent Foundations Forum, a discussion forum for AI alignment research. Many of our subsequent publications have been developed from material on the forum, beginning with Patrick LaVictoire’s “An introduction to Löb’s theorem in MIRI’s research.”

We have also produced a number of new papers in 2015 and, most importantly, arrived at new research insights.

In decision theory, Patrick LaVictoire and others have developed new results pertaining to bargaining and division of trade gains, using the proof-based decision theory framework. Meanwhile, the team has been developing a better understanding of the strengths and limitations of different approaches to decision theory, an effort spearheaded by Eliezer Yudkowsky, Benya Fallenstein, and me, culminating in some insights that will appear in a paper next year. Andrew Critch has proved some promising results about bounded versions of proof-based decision-makers, which will also appear in an upcoming paper. Additionally, we presented a shortened version of our overview paper at AGI-15.

In Vingean reflection, Benya Fallenstein and Research Associate Ramana Kumar collaborated on “Proof-producing reflection for HOL” (presented at ITP 2015) and have been working on an FLI-funded implementation of reflective reasoning in the HOL theorem prover. Separately, the reflective oracle framework has helped us gain a better understanding of what kinds of reflection are and are not possible, yielding some nice technical results and a few insights that seem promising. We also published the overview paper “Vingean reflection.”

Jessica Taylor, Benya Fallenstein, and Eliezer Yudkowsky have focused on error tolerance on and off throughout the year. We released Taylor’s “Quantilizers” (accepted to a workshop at AAAI-16) and presented the paper “Corrigibility” at a AAAI-15 workshop.

In value specification, we published the AAAI-15 workshop paper “Concept learning for safe autonomous AI” and the overview paper “The value learning problem.” With support from an FLI grant, Jessica Taylor is working on better formalizing subproblems in this area, and has recently begun writing up her thoughts on this subject on the research forum.

Lastly, in forecasting and strategy, we published “Formalizing convergent instrumental goals” (accepted to a AAAI-16 workshop) and two historical case studies: “The Asilomar Conference” and “Leó Szilárd and the danger of nuclear weapons.” Many other strategic analyses have been posted to the recently revamped AI Impacts site, where Katja Grace has been publishing research about patterns in technological development.

Fundraiser Targets and Future Plans

Like our last fundraiser, this will be a non-matching fundraiser with multiple funding targets our donors can choose between to help shape MIRI’s trajectory. Our successful summer fundraiser has helped determine how ambitious we’re making our plans; although we may still slow down or accelerate our growth based on our fundraising performance, our current plans assume a budget of roughly $1,825,000 per year.

Of this, about $100,000 is being paid for in 2016 through FLI grants, funded by Elon Musk and the Open Philanthropy Project. The rest depends on our fundraising and grant-writing success. We have a twelve-month runway as of January 1, which we would ideally like to extend.

Taking all of this into account, our winter funding targets are:

Target 1 — $150k: Holding steady. At this level, we would have enough funds to maintain our runway in early 2016 while continuing all current operations, including running workshops, writing papers, and attending conferences.

Target 2 — $450k: Maintaining MIRI’s growth rate. At this funding level, we would be much more confident that our new growth plans are sustainable, and we would be able to devote more attention to academic outreach. We would be able to spend less staff time on fundraising in the coming year, and might skip our summer fundraiser.

Target 3 — $1M: Bigger plans, faster growth. At this level, we would be able to substantially increase our recruiting efforts and take on new research projects. It would be evident that our donors’ support is stronger than we thought, and we would move to scale up our plans and growth rate accordingly.

Target 4 — $6M: A new MIRI. At this point, MIRI would become a qualitatively different organization. With this level of funding, we would be able to diversify our research initiatives and begin branching out from our current agenda into alternative angles of attack on the AI alignment problem.

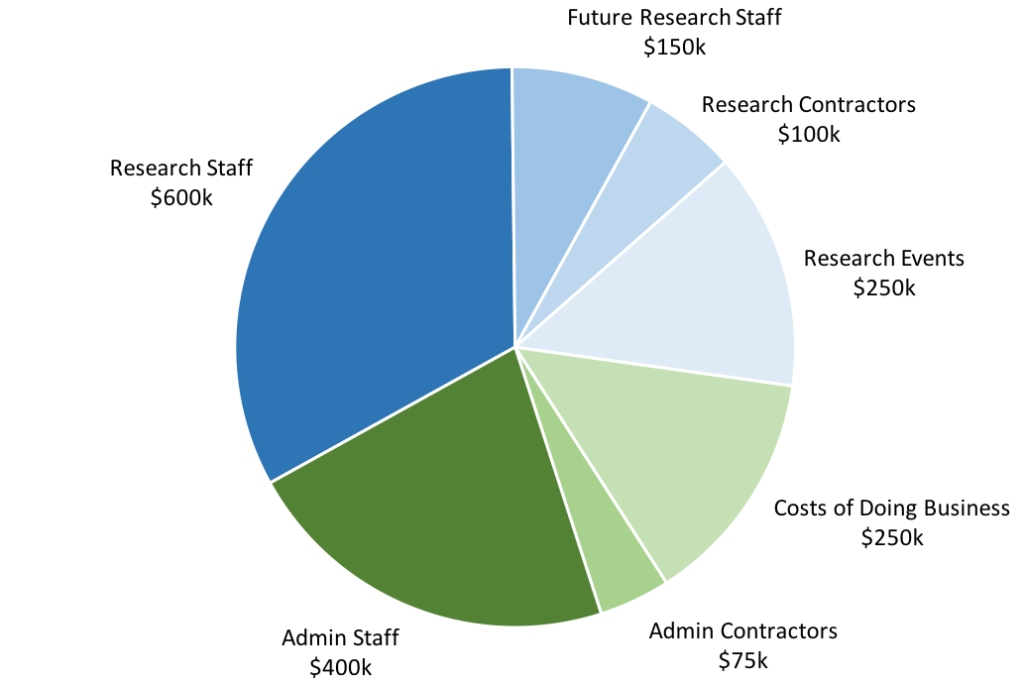

Our largest cost ($700,000) is in wages and benefits for existing research staff and contracted researchers, including research associates. Our current priority is to further expand the team. We expect to spend an additional $150,000 on salaries and benefits for new research staff in 2016, but that number could go up or down significantly depending on when new research fellows begin work:

- Mihály Bárász, who was originally slated to begin in November 2015, has delayed his start date due to unexpected personal circumstances. He plans to join the team in 2016.

- We are recruiting a specialist for our type theory in type theory project, which is aimed at developing simple programmatic models of reflective reasoners. Interest in this topic has been increasing recently, which is exciting; but the basic tools needed for our work are still missing. If you have programmer or mathematician friends who are interested in dependently typed programming languages and MIRI’s work, you can send them our application form.

- We are considering several other possible additions to the research team.

Much of the rest of our budget goes into fixed costs that will not need to grow much as we expand the research team. This includes $475,000 for administrator wages and benefits and $250,000 for costs of doing business. Our main cost of doing business is renting office space (slightly over $100,000).

Note that the boundaries between these categories are sometimes fuzzy. For example, my salary is included in the admin staff category, despite the fact that I spend some of my time on technical research (and hope to increase that amount in 2016).

Our remaining budget goes into organizing or sponsoring research events, such as fellows programs, MIRIx events, or workshops ($250,000). Some activities (e.g., traveling to conferences) are aimed at sharing our work with the larger academic community. Others, such as researcher retreats, are focused on solving open problems in our research agenda. After experimenting with different types of research staff retreat in 2015, we’re beginning to settle on a model that works well, and we’ll be running a number of retreats throughout 2016.
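
As a quick sanity check, the line items quoted in this section can be tallied against the roughly $1,825,000 annual budget mentioned earlier. The sketch below is only illustrative arithmetic: the category labels are informal shorthand rather than official accounting categories, and the new-hire figure is the $150,000 estimate, which could move up or down depending on start dates.

```python
# Budget line items quoted in this post, in US dollars.
# The keys are informal labels chosen for this sketch, not official categories.
budget_items = {
    "existing research staff and contractors": 700_000,
    "new research staff (estimate)": 150_000,
    "administrator wages and benefits": 475_000,
    "costs of doing business": 250_000,
    "research events": 250_000,
}

stated_annual_budget = 1_825_000  # "roughly $1,825,000 per year"
fli_grants_2016 = 100_000         # "about $100,000" covered by FLI grants

# Sum the line items and subtract the portion already covered by grants.
total = sum(budget_items.values())
remaining = total - fli_grants_2016

print(f"Sum of line items: ${total:,}")
print(f"Matches stated budget: {total == stated_annual_budget}")
print(f"Left to cover through fundraising and other grants: ${remaining:,}")
```

Under these figures, the five line items account for the full stated budget, and everything not covered by the FLI grants depends on fundraising and grant-writing.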

In past years, we’ve generally raised $1M per year, and spent a similar amount. Thanks to substantial recent increases in donor support, however, we’re in a position to scale up significantly.

Our donors blew us away with their support in our last fundraiser. If we can continue our fundraising and grant successes, we’ll be able to sustain our new budget and act on the unique opportunities outlined in Why Now Matters, helping set the agenda and build the formal tools for the young field of AI safety engineering. And if our donors keep stepping up their game, we believe we have the capacity to scale up our program even faster. We’re thrilled at this prospect, and we’re enormously grateful for your support.