Colloquium Series on Robust and Beneficial AI (CSRBAI)

Overview

From May 27 to June 17, 2016, the Machine Intelligence Research Institute (MIRI) and Oxford University’s Future of Humanity Institute (FHI) co-hosted a Colloquium Series on Robust and Beneficial AI at MIRI’s offices in Berkeley, California. This program brought together a variety of academics and professionals to address the technical challenges associated with AI robustness and reliability, with a goal of facilitating conversations between people interested in a number of different approaches.

Attendees worked to identify and collaborate on research projects aimed at ensuring AI is beneficial in the long run, with a focus on technical questions that appear tractable today. The series included lectures by selected speakers, open-ended discussions, and working groups on specific problems. Targeted workshops ran on weekends.

Participants attended one (or part of one) Talk and/or Workshop of their choosing. Attendance of the entire event was possible, though not required.

The program was free to attend. Food was provided, and accommodations and travel assistance were provided on a limited basis.

Venue

MIRI’s new offices in downtown Berkeley, California.

Schedule and Topics

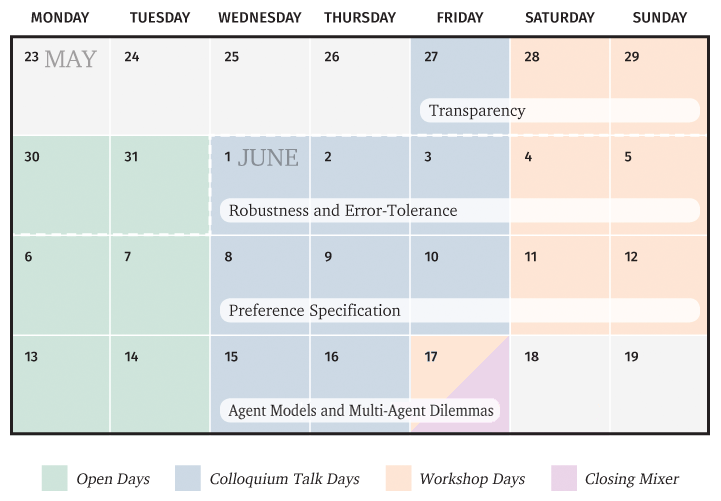

The entire program ran from Friday, May 27 to Saturday, June 17, 2016, ending the day before ICML. The program was broken down into four sections detailed below.

Daily Schedule

Every day the basic schedule is:

- 10:00am – Doors officially open.

- 11:00am – Day begins (first talk or workshop opening).

- 1:00pm – Lunch provided onsite.

- 6:00pm – Dinner provided onsite.

- 7:00pm – Doors officially close.

CSRBAI Week 1: Transparency

In many cases, it can be prohibitively difficult for humans to understand AI systems’ internal states and reasoning. This makes it more difficult to anticipate such systems’ behavior and correct errors. On the other hand, there have been striking advances in communicating the internals of some machine learning systems, and in formally verifying certain features of algorithms. We would like to see how far we can push the transparency of AI systems while maintaining their capabilities.

These topics are introduced in the first week but built on afterwards, as transparency is an important component of many approaches to robustness and error-tolerance.

Relevant topics include:

- Robust and Transparent Artificial Intelligence Via Anomaly Detection and Explanation, Tom Dietterich

- Formal verification and homotopy type theory

Scheduled events:

Event Kickoff and Colloquium Talks

Fri, May 27

Stuart Russell (UC Berkeley)

AI: The Story So Far – video, slides

Abstract: I will discuss the need for a fundamental reorientation of the field of AI towards provably beneficial systems. This need has been disputed by some, and I will consider their arguments. I will also discuss the technical challenges involved and some promising initial results.

Alan Fern (Oregon State University)

Toward Recognizing and Explaining Uncertainty – video, slides 1, slides 2

Francesca Rossi (IBM Research)

Moral Preferences – video, slides

Abstract: Intelligent systems are going to be more and more pervasive in our everyday lives. They will take care of elderly people and kids, they will drive for us, and they will suggest doctors how to cure a disease. However, we cannot let them do all this very useful and beneficial tasks if we don’t trust them. To build trust, we need to be sure that they act in a morally acceptable way. So it is important to understand how to embed moral values into intelligent machines. Existing preference modeling and reasoning framework can be a starting point, since they define priorities over actions, just like an ethical theory does. However, many more issues are involved when we mix preferences (that are at the core of decision making) and morality, both at the individual level and in a social context. I will discuss some of these issues as well as some possible solutions.

Workshop on Transparency

Sat/Sun, May 28-29

Tom Dietterich (Oregon State University)

Issues Concerning AI Transparency – slides

This workshop focused on the topic of transparency in AI systems, and how we can increase transparency while maintaining capabilities. This workshop explored these issues through informal presentations, small group collaborations, and regular regrouping and discussion.

CSRBAI Week 2: Robustness and Error-Tolerance

How can we ensure that when AI system fail, they fail gracefully and detectably? This is difficult for systems that must adapt to new or changing environments; standard PAC guarantees for machine learning systems fail to hold when the distribution of test data does not match the distribution of training data. Moreover, systems capable of means-end reasoning may have incentives to conceal failures that would result in their being shut down. We would much prefer to have methods of developing and validating AI systems such that any mistakes can be quickly noticed and corrected.

Relevant topics include:

- Robust Probabilistic Inference Engines for Autonomous Agents, Stefano Ermon

- Towards Safer Inductive Learning, Brian Ziebart

- Corrigibility, Stuart Russell and Patrick LaVictoire

- Counterfactual Human Oversight, Paul Christiano

Scheduled events:

Colloquium Talks

Wed, June 1

Stefano Ermon (Stanford)

Probabilistic Inference and Accuracy Guarantees – video, slides

Abstract: Statistical inference in high-dimensional probabilistic models is one of the central problems in AI. To date, only a handful of distinct methods have been developed, most notably (MCMC) sampling and variational methods. While often effective in practice, these techniques do not typically provide guarantees on the accuracy of the results. In this talk, I will present alternative approaches based on ideas from the theoretical computer science community. These approaches can leverage recent advances in combinatorial optimization and provide provable guarantees on the accuracy.

Thu, June 2

Paul Christiano (UC Berkeley)

Training an aligned RL agent – video

Jim Babcock

The AGI Containment Problem – video, slides

Abstract: Ensuring that powerful AGIs are safe will involve testing and experimenting on them, but a misbehaving AGI might try to tamper with its test environment to gain access to the internet or modify the results of tests. I will discuss the challenges of securing environments to test AGIs in. http://arxiv.org/abs/1604.00545

Fri, June 3

Bart Selman (Cornell University)

Non-Human Intelligence – video, slides

Jessica Taylor (MIRI)

Value Alignment for Advanced Machine Learning Systems – video

Abstract: If artificial general intelligence is developed using algorithms qualitatively similar to those of modern machine learning, how might we target the resulting system to safely accomplish useful goals in the world? I present a technical agenda for a new MIRI project focused on this question.

Workshop on Robustness and Error-Tolerance

Sat/Sun, June 4–5

This workshop focused on the topic of robustness and error-tolerance in AI systems, and how to ensure that when AI system fail, they fail gracefully and detectably. We want methods of developing and validating AI systems such that any mistakes can be quickly noticed and corrected. This workshop explored these issues through informal presentations, small group collaborations, and regular regrouping and discussion.

CSRBAI Week 3: Preference Specification

The perennial problem of wanting code to “do what I mean, not what I said” becomes increasingly challenging when systems may find unexpected ways to pursue a given goal. Highly capable AI systems thereby increase the difficulty of specifying safe and useful goals, or specifying safe and useful methods for learning human preferences.

Relevant topics include:

- Value Alignment and Moral Metareasoning, Stuart Russell

- Moral Preferences, Francesca Rossi

- Computational Ethics for Probabilistic Planning, Daniel Weld

- Inverse reinforcement learning / apprenticeship learning

- The Value Learning Problem, Nate Soares

Scheduled events:

Colloquium Talks

Wed, June 8

Dylan Hadfield-Menell (UC Berkeley)

The Off-Switch: Designing Corrigible, yet Functional, Artificial Agents – video, slides

Abstract: An artificial agent is corrigible if it accepts or assists in outside correction for its objectives. At a minimum, a corrigible agent should allow its programmers to turn it off. An artificial agent is functional if it is capable of performing non-trivial tasks. For example, a machine that immediately turns itself off is useless (except perhaps as a novelty item). In a standard reinforcement learning agent, incentives for these behaviors are essentially at odds. The agent will either want to be turned off, want to stay alive, or be indifferent between the two. Of these, indifference is the only safe and useful option but there is reason to believe that this is a strong condition on the agent’s incentives. In this talk, I will propose a design for a corrigible, yet functional, agent as the solution to a two-player cooperative game where the robot’s goal is to maximize the humans sum of rewards. We do an equilibrium analysis of the solutions to the game and identify three key properties. First, we show that if the human acts rationally, then the robot will be corrigible. Second, we show that if the robot has no uncertainty about human preferences, then the robot will be incorrigible or non-function if the human is even slightly suboptimal. Finally, we analyze the Gaussian setting and characterize the necessary and sufficient conditions, as a function of the robot’s belief about human preferences and the degree of human irrationality, to ensure that the robot will be corrigible and functional.

Thu, June 9

Bas Steunebrink (The Swiss AI Lab IDSIA)

About Understanding, Meaning, and Values – video, slides

Abstract: We will discuss ongoing research into value learning: how an agent can gradually learn to understand the world it’s in, learn to understand what we mean for it to do, learn to understand as well as be compelled to adhere to proper values, and learn to do so robustly in the face of inaccurate, inconsistent, and incomplete information as well as underspecified, conflicting, and updatable goals. To fulfill this ambitious vision we have a long road of gradual teaching and testing ahead of us.

Jan Leike (Future of Humanity Institute)

General Reinforcement Learning – video, slides

Abstract: General reinforcement learning (GRL) is the theory of agents acting in unknown environments that are non-Markov, non-ergodic, and only partially observable. GRL can serve as a model for strong AI and has been used extensively to investigate questions related to AI safety. Our focus is not on practical algorithms, but rather on the fundamental underlying problems: How do we balance exploration and exploitation? How do we explore optimally? When is an agent optimal? We outline current shortcomings of the model and point to future research directions.

Fri, June 10

Tom Everitt (Australian National University)

Avoiding Wireheading with Value Reinforcement Learning – video, slides

Abstract: How can we design good goals for arbitrarily intelligent agents? Reinforcement learning (RL) may seem like a natural approach. Unfortunately, RL does not work well for generally intelligent agents, as RL agents are incentivised to shortcut the reward sensor for maximum reward — the so-called wireheading problem. In this paper we suggest an alternative to RL called value reinforcement learning (VRL). In VRL, agents use the reward signal to learn a utility function. The VRL setup allows us to remove the incentive to wirehead by placing a constraint on the agent’s actions. The constraint is defined in terms of the agent’s belief distributions, and does not require an explicit specification of which actions constitute wireheading. Our VRL agent offers the ease of control of RL agents and avoids the incentive for wireheading. https://arxiv.org/abs/1605.03143

Jaan Altosaar (Princeton & Columbia)

f-Proximity Variational Inference

Abstract: Variational inference is a popular method for approximate posterior inference. However, if the parameters are initialized poorly, this method can suffer from pathologies and ‘turn off’ parts of the model. We remedy this by developing a general framework for constraining model parameters through constraints that can be any function of the parameters. We derive a scalable variant that runs as fast as variational inference. In our experiments, we show our approach is less sensitive to initialization and can increase diversity of the posterior across data for models with both discrete and continuous variables. In variational autoencoders (models that use neural networks to scale Bayesian inference) we improve usage of model capacity and counterintuitively demonstrate that this does not lead to better performance.

Workshop on Preference Specification

Sat/Sun, June 11–12

This workshop focused on the topic of preference specification for highly capable AI systems. This workshop explored these issues through informal presentations, small group collaborations, and regular regrouping and discussion.

CSRBAI Week 4: Agent Models and Multi-Agent Dilemmas

When designing an agent to behave well in its environment, it is risky to ignore the effects of the agent’s own actions on the environment or on other agents within the environment. For example, a spam classifier in wide use may cause changes in the distribution of data it receives, as adversarial spammers attempt to bypass the classifier. Considerations from game theory, decision theory, and economics become increasingly useful in such cases.

Relevant topics include:

- Adversarial games and cybersecurity

- Multi-agent coordination

- Economic models of AI interactions

Scheduled events:

Colloquium Talks

Wed, June 15

Michael Wellman (University of Michigan)

Autonomous Agents in Financial Markets: Implications and Risks – video, slides

Abstract: Design for robust and beneficial AI is a topic for the future, but also of more immediate concern for the leading edge of autonomous agents emerging in many domains today. One area where AI is already ubiquitous is on financial markets, where a large fraction of trading is routinely initiated and conducted by algorithms. Models and observational studies have given us some insight on the implications of AI traders for market performance and stability. Design and regulation of market environments given the presence of AIs may also yield lessons for dealing with autonomous agents more generally.

Stefano Albrecht (UT Austin)

Learning to distinguish between belief and truth – video, slides

Abstract: Intelligent agents routinely build models of other agents to facilitate the planning of their own actions. Sophisticated agents may also maintain beliefs over a set of alternative models. Unfortunately, these methods usually do not check the validity of their models during the interaction. Hence, an agent may learn and use incorrect models without ever realising it. In this talk, I will argue that robust agents should have both abilities: to construct models of other agents and contemplate the correctness of their models. I will present a method for behavioural hypothesis testing along with some experimental results. The talk will conclude with open problems and a possible research agenda.

Thu, June 16

Stuart Armstrong (Future of Humanity Institute, Oxford University)

Reduced impact AI and other alternatives to friendliness – video, slides

Abstract: This talk will look at some of the ideas developed to create safe AI without solving the problem of friendliness. It will focus first on “reduced impact AI”, AIs designed to have little effect on the world – but from whom high impact can nevertheless be extracted. It will then delve into the new idea of AIs designed to have preferences over their own virtual worlds only, and look at the advantages – and limitations – of using indifference as a tool of AI control.

Andrew Critch (MIRI)

Robust cooperation of bounded agents – video

Abstract: The first interaction between a pair of agents who might destroy each other can resemble a one-shot prisoner’s dilemma. Consider such a game where each player is an algorithm with read-access to its opponent’s source code. Tennenholtz (2004) introduced an agent which cooperates iff its opponent’s source code is identical to its own, thus sometimes achieving mutual cooperation while remaining unexploitable in general. However, precise equality of programs is a fragile cooperative criterion. Here, I will exhibit a new and more robust cooperative criterion, inspired by ideas of LaVictoire, Barasz and others (2014), using a new theorem in provability logic for bounded reasoners.

Workshop on Agent Models and Multi-Agent Dilemmas

Fri, June 17

This workshop focused on the topics of designing agents that behave well in their environments, without ignoring the effects of the agent’s own actions on the environment or on other agents within the environment. This workshop explored these issues through informal presentations, small group collaborations, and regular regrouping and discussion.

Dinner and Closing Mixer

Format

Colloquium Talk Days

Colloquium talk days feature between one and three talks, beginning at 11:00 am, 12:00 pm, and 2:00 pm. Talks will range from 20 minutes in length to 55 minutes, as needed for the topic, with remaining time devoted to discussion, Q&As, and breaks. The remaining time in the afternoon is left unstructured.

Workshops

Weekend workshops focus on working in small groups to add to the frontier of knowledge and begin future collaborations (as opposed to presenting existing research). Each workshop begins with some short opening talks, then participants will assemble an agenda of topics for discussion and investigation in smaller subgroups. These subgroups are provisional and fluid; the goal is for people to work together effectively on topics of common interest.

Open Days

Mondays and Tuesdays are mostly unscheduled, and can be used in whatever way attendees find useful. There will be lots of room for free-form conversation, in a space with a number of breakout rooms and whiteboards available.

Apply Now

Applications to attend this event are now closed.

Information for Participants

General visitor information may be found at intelligence.org/visitors/.

Cost

The program is free to attend. Food is be provided, and accommodations and travel assistance are provided on a limited basis.

Accommodations

Accommodations are provided for attendees, as available, at a hotel in downtown Berkeley a block away from the MIRI offices.

Travel

Flight and travel expenses will be reimbursed for select attendees. Attendees will be responsible for booking their travel. Send receipts to receipts@intelligence.org, along with your preferred method of reimbursement (PayPal, ACH, or check).

International Participants

Attendees are provided with a Letter of Invitation for use when entering the United States.